On September 26, 1983, Stanislav Petrov, a lieutenant colonel in the Soviet Air Defense Forces, watched his computer screen glow with a terrifying warning: five American nuclear missiles were headed for the USSR. Humanity survived because Petrov famously reasoned that “when people start a war, they don’t start it with only five missiles,” and chose to break protocol by reporting the incident as a system malfunction rather than an incoming assault. Further investigation revealed that the “attack” was a software hallucination: the system had mistaken the sun’s reflection off high-altitude clouds for the thermal signatures of incoming nuclear warheads.

Fast-forward to today: while the stakes may not always involve nuclear war, organizations are adopting complex agentic AI architectures that lack the structural integrity to handle real-world operational pressure. Recent research examining 1,642 executions across seven popular multi-agent system (MAS) frameworks revealed staggering failure rates between 41% and 86%. Despite advancements in LLMs, whether a multi-agent system works as intended is currently little more than a coin flip.

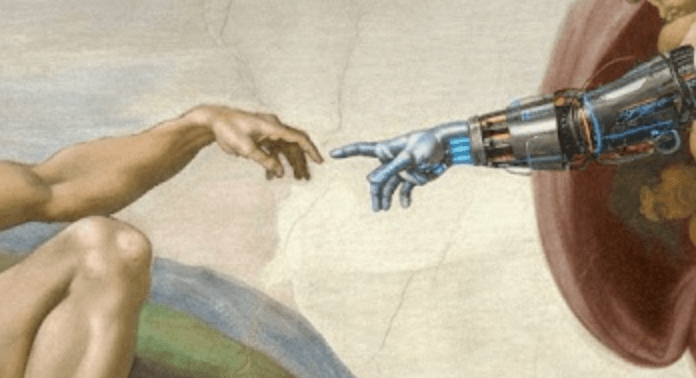

The paper “Towards a Declarative Agentic Layer for Intelligent Agents” introduces DALIA, a blueprint for the architectural layer necessary to defend agents against these hallucinations. Here are the key takeaways for building reliable AI.

1. The Failure of Inferred Capability

Most agents today rely on “linguistic inference,” where they guess what a tool can do based on its name or description. DALIA replaces this with a Capability Semantic Model, where a tool’s functions, preconditions, and postconditions are explicitly defined before an agent interacts with the tool.

- Example: You ask an AI agent to “Book a hotel in New York.” The agent sees a tool called hotel_provider and assumes it can handle the booking. It tries to run it, only to realize halfway through that it lacks a GuestID parameter or that the tool is actually only for searching, not booking. The process crashes.

- The DALIA Solution: Before acting, the system checks the semantic model for hotel.reserve. It “knows” exactly what it needs: a HotelID, CheckInDate, and a verified PaymentMethod. If those are not present, it identifies the gap immediately rather than guessing.
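
To make the idea concrete, here is a minimal Python sketch of a declared capability and a precondition check. It is not DALIA's actual schema: the `Capability` class, the `missing_inputs` helper, and the `ReservationID` output are hypothetical names invented for illustration, and the "verified" status of the payment method is not modeled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Capability:
    """Explicit declaration of what a tool operation needs and yields."""
    name: str
    required_inputs: frozenset  # preconditions: fields that must be present
    produces: frozenset         # postconditions: fields available afterwards


# Hypothetical declaration for the hotel.reserve example above.
# "ReservationID" is an assumed output, not taken from the paper.
HOTEL_RESERVE = Capability(
    name="hotel.reserve",
    required_inputs=frozenset({"HotelID", "CheckInDate", "PaymentMethod"}),
    produces=frozenset({"ReservationID"}),
)


def missing_inputs(capability, context):
    """Return the sorted list of required fields absent from the context."""
    return sorted(capability.required_inputs - set(context))


# The agent has a hotel and a date, but no payment method yet.
context = {"HotelID": "H-123", "CheckInDate": "2025-06-01"}
gaps = missing_inputs(HOTEL_RESERVE, context)
print(gaps)  # ['PaymentMethod']
```

The point of the sketch is the ordering: the gap is found by comparing declared requirements against known facts before any tool is called, rather than by failing halfway through a booking.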

2. The Directory Approach

In complex business environments, a central registry is required to manage which agent is authorized to use specific resources. Current standards often give agents a list of tools with no context. DALIA introduces a Federated Agent Directory that maintains a global view of every agent’s role and access rights.

- Example: Imagine you have a “Travel Agent” and a “Payment Agent.” Without a directory, the Travel Agent might try to access a corporate credit card directly, which is a massive security and operational risk.

- The DALIA Solution: The Federated Directory acts as the authoritative registry, ensuring the Travel Agent can only “search” and “request,” while only the Payment Agent has the capability to finalize a transaction.
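
A rough sketch of that access check might look like the following. It is a single in-memory registry, so it illustrates only the authorization lookup and not the federated part; `AgentDirectory`, the agent identifiers, and the action names are assumptions made for this example rather than anything defined by the paper.

```python
class AgentDirectory:
    """Hypothetical registry mapping each agent to the actions it may perform."""

    def __init__(self):
        self._grants = {}  # agent name -> frozenset of permitted actions

    def register(self, agent, actions):
        """Record the full set of actions an agent is allowed to perform."""
        self._grants[agent] = frozenset(actions)

    def is_authorized(self, agent, action):
        """Unknown agents and unlisted actions are denied by default."""
        return action in self._grants.get(agent, frozenset())

    def authorize(self, agent, action):
        """Raise instead of letting an unauthorized action proceed."""
        if not self.is_authorized(agent, action):
            raise PermissionError(f"{agent} is not authorized to {action}")


directory = AgentDirectory()
directory.register("travel_agent", {"search", "request"})
directory.register("payment_agent", {"finalize_transaction"})

directory.authorize("travel_agent", "search")  # allowed, returns silently
try:
    directory.authorize("travel_agent", "finalize_transaction")
except PermissionError as err:
    print(err)  # travel_agent is not authorized to finalize_transaction
```

Denying by default is the design choice that matters here: an agent that was never registered, or an action that was never granted, fails closed.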

3. Stick to the Script

Many AI systems rely on iterative, free-form dialogue to coordinate, which often leads to “hallucinated actions” where agents convince themselves they have finished a task they never actually started. DALIA enforces Deterministic Orchestration by creating a rigid Task Graph.

- The DALIA Solution: Agent A (Search) must produce a Hotel_List before Agent B (Booking) is permitted to execute a Payment. If an agent attempts to skip to booking without a verified HotelID, the “gates” of the task graph remain closed. The system moves based on logic and grounded operations, not conversational improvisation.
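
The gating behavior can be sketched in a few lines of Python. This is an illustrative toy, not the paper's implementation: `TaskGraph`, its methods, and the step names are hypothetical, and each step's output is treated as a named artifact that later steps may require.

```python
class TaskGraph:
    """Hypothetical task graph: a step runs only once its inputs exist."""

    def __init__(self):
        self._steps = {}      # step name -> (required artifacts, produced artifact)
        self._artifacts = {}  # artifact name -> value produced so far

    def add_step(self, name, requires, produces):
        self._steps[name] = (frozenset(requires), produces)

    def can_run(self, name):
        """A gate is open only if every required artifact has been produced."""
        requires, _ = self._steps[name]
        return requires <= set(self._artifacts)

    def run(self, name, action):
        """Execute a step, or refuse if its gate is still closed."""
        requires, produces = self._steps[name]
        if not self.can_run(name):
            missing = sorted(requires - set(self._artifacts))
            raise RuntimeError(f"gate closed for {name}: missing {missing}")
        # The action sees a copy of the artifacts, so it cannot forge new ones.
        self._artifacts[produces] = action(dict(self._artifacts))
        return self._artifacts[produces]


graph = TaskGraph()
graph.add_step("search", requires=[], produces="Hotel_List")
graph.add_step("booking", requires=["Hotel_List"], produces="Payment")

print(graph.can_run("booking"))  # False
graph.run("search", lambda artifacts: ["H-123", "H-456"])
print(graph.can_run("booking"))  # True
```

An agent claiming in conversation that the search is done changes nothing here; only a recorded `Hotel_List` artifact opens the booking gate.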

For those deploying capital or building companies in this space, DALIA could represent the architectural requirement for reliable agent orchestration.

- For Founders: Success is not only about building smarter models or products on smarter models; it is about building a service that the public can trust. Founders who build systems that are verifiable and reproducible will win enterprise contracts over those selling agents that might hallucinate a critical business process. Declare war against hallucinations.

- For Investors: Look for “red flags” in agentic startups that lack a clear architectural grounding strategy. If a team cannot explain how its agents operate consistently in real-world scenarios without falling back on blind trust in the model’s “intellect,” it is likely building a brittle system.

References:

https://www.youtube.com/watch?v=L7EmLf4Xlq0

Disclaimer: The views and opinions expressed in this article are strictly those of the author and do not reflect the official policy or position of any current or former employer. This content is provided for informational purposes only and does not constitute investment, financial, legal, or professional advice.